Data Security Overview

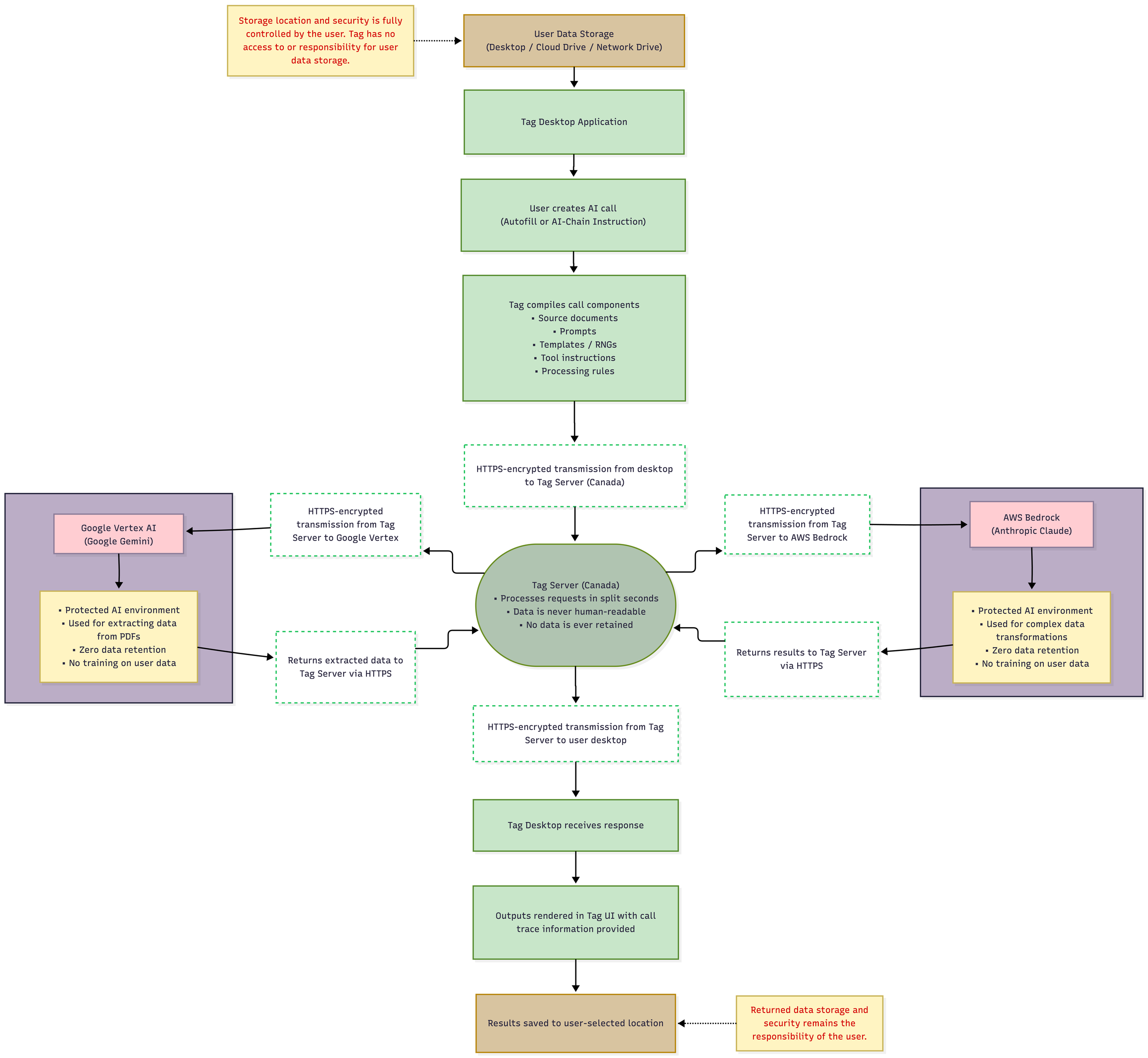

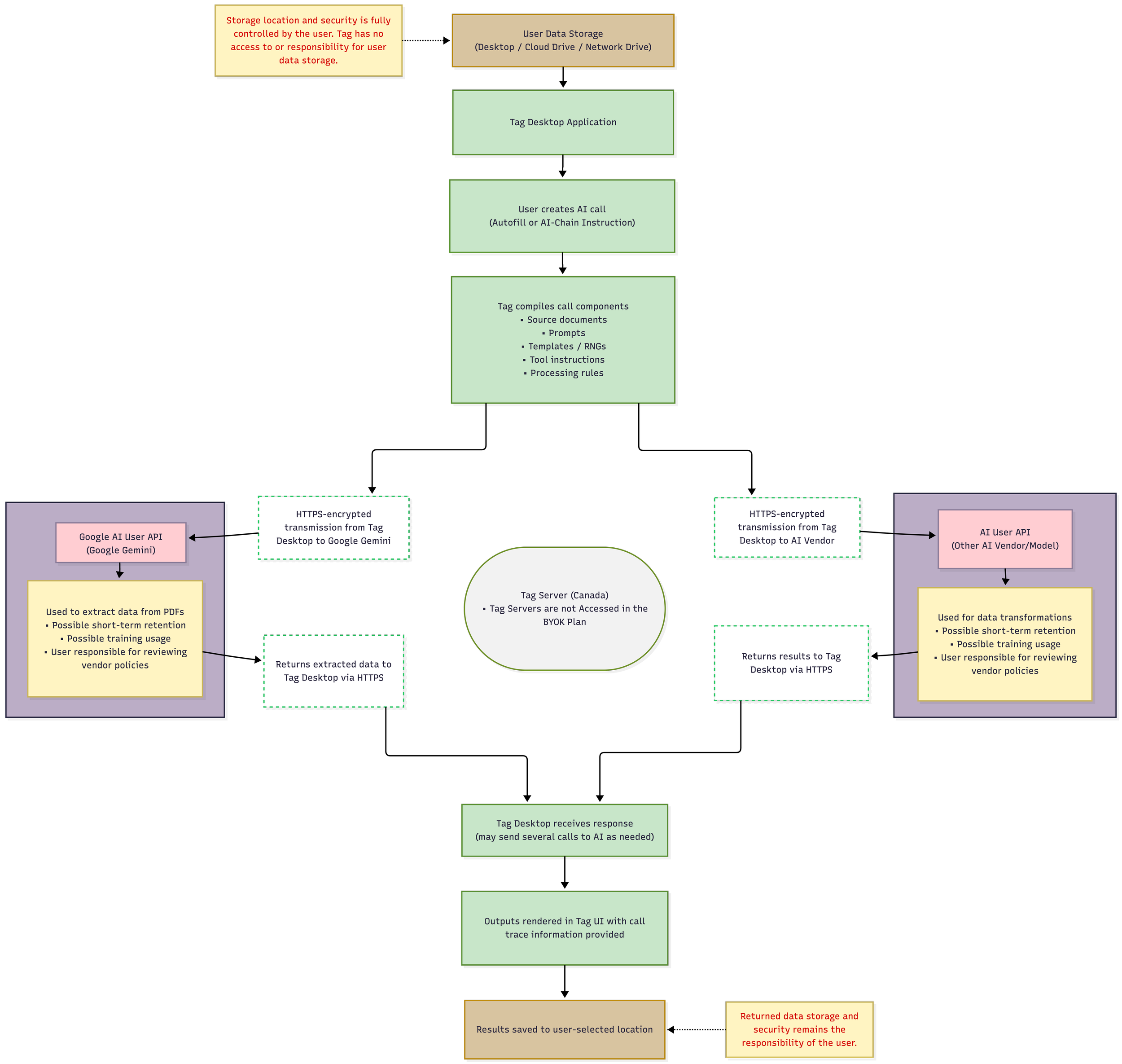

Data flow is handled differently depending on your plan.

The Managed Plan routes data through Tag servers to secure AI environments in Google Vertex AI and Amazon Bedrock, where it is never retained or used for training. This makes the Managed Plan especially well-suited for Protected Health Information (PHI) and better aligned with HIPAA and PIPEDA requirements.

With the Bring Your Own Key (BYOK) Plan, you connect directly to your chosen AI providers using your own API keys, bypassing Tag servers altogether. Your data is then governed by those providers' security and retention policies.

In both plans, data is encrypted in transit using HTTPS. Requests may wait in an encrypted processing queue, particularly for larger workloads in “economy mode,” but they are ultimately processed quickly and then immediately re-encrypted, so the data is never human-readable.
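Tag does not publish how its processing queue is built, so the following is only a hypothetical Java sketch of what “waiting in an encrypted processing queue” can mean, assuming AES-GCM with a server-held key. The class and method names (`EncryptedQueue`, `enqueue`, `dequeueForProcessing`) are invented for illustration. Each request is encrypted before it is queued and decrypted only when a worker picks it up; HTTPS in transit and the re-encryption of results are outside the sketch.

```java
import java.nio.charset.StandardCharsets;
import java.security.GeneralSecurityException;
import java.security.SecureRandom;
import java.util.ArrayDeque;
import java.util.Queue;
import javax.crypto.Cipher;
import javax.crypto.KeyGenerator;
import javax.crypto.SecretKey;
import javax.crypto.spec.GCMParameterSpec;

// Hypothetical sketch: queued requests exist only as AES-GCM ciphertext.
final class EncryptedQueue {
    private static final int IV_BYTES = 12;   // 96-bit nonce, the recommended size for GCM
    private static final int TAG_BITS = 128;  // authentication tag length in bits

    // One queued entry: a fresh random nonce plus the ciphertext (GCM tag appended).
    private static final class Sealed {
        final byte[] iv;
        final byte[] ciphertext;

        Sealed(byte[] iv, byte[] ciphertext) {
            this.iv = iv;
            this.ciphertext = ciphertext;
        }
    }

    private final SecretKey key;
    private final SecureRandom random = new SecureRandom();
    private final Queue<Sealed> queue = new ArrayDeque<>();

    EncryptedQueue(SecretKey key) {
        this.key = key;
    }

    // Generates a 256-bit AES key. Assumption: a real deployment would likely
    // obtain keys from a key management service instead.
    static SecretKey newKey() {
        try {
            KeyGenerator gen = KeyGenerator.getInstance("AES");
            gen.init(256);
            return gen.generateKey();
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    // Encrypts the request before it is queued; the plaintext is never stored.
    void enqueue(String request) {
        byte[] iv = new byte[IV_BYTES];
        random.nextBytes(iv);
        byte[] ct = crypt(Cipher.ENCRYPT_MODE, iv, request.getBytes(StandardCharsets.UTF_8));
        queue.add(new Sealed(iv, ct));
    }

    // Decrypts only when a worker takes the request for processing; null if empty.
    String dequeueForProcessing() {
        Sealed s = queue.poll();
        if (s == null) {
            return null;
        }
        return new String(crypt(Cipher.DECRYPT_MODE, s.iv, s.ciphertext), StandardCharsets.UTF_8);
    }

    int size() {
        return queue.size();
    }

    // Copy of the bytes actually held for the next entry, to show they are not readable text.
    byte[] peekStoredBytes() {
        Sealed s = queue.peek();
        return s == null ? null : s.ciphertext.clone();
    }

    private byte[] crypt(int mode, byte[] iv, byte[] input) {
        try {
            Cipher cipher = Cipher.getInstance("AES/GCM/NoPadding");
            cipher.init(mode, key, new GCMParameterSpec(TAG_BITS, iv));
            return cipher.doFinal(input);
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        EncryptedQueue q = new EncryptedQueue(newKey());
        q.enqueue("Summarize: session notes for client A");
        System.out.println("queued entries: " + q.size());
        System.out.println("processing: " + q.dequeueForProcessing());
    }
}
```

A fresh random nonce is generated for every entry because reusing a nonce with the same GCM key undermines its confidentiality and integrity guarantees.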

Managed Plan Data Flow

BYOK (bring your own key) Plan Data Flow

We DO NOT use Autonomous AI Agents!

Why We Don’t Use Autonomous AI Agents

Tag is designed to keep the Human-at-the-Wheel at all times. All AI-assisted processes are initiated, supervised, and verified by users. We do not use autonomous AI agents that can act independently within your system.

AI agents are typically systems that plan and execute actions on their own. While compelling in theory, they introduce real risks in practice, such as misinterpreted intent, unintended action chains, unauthorized access to files, and other unpredictable behavior. In environments handling sensitive or professional data, these risks are not acceptable.

In Tag, AI may assist with extracting data or generating content, but it never initiates actions on your computer. Any desktop operation (such as accessing files in Windows File Explorer or Mac Finder, or opening a document in a word processor or PDF viewer) is triggered by you clicking a button or menu item. That click executes explicit, traditional Java code to perform the action. The AI is not involved in these actions and does not decide what to open, move, or modify on your computer.
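To illustrate what explicit, traditional Java code means here, the following is a hypothetical sketch (not Tag's source; `OpenDocumentAction` and `run` are invented names) of a user-initiated “open document” action in a Java desktop app. The file comes from the user's own selection, the handler runs only when called from a click, and the standard `java.awt.Desktop` API hands the file to the operating system's default application.

```java
import java.awt.Desktop;
import java.io.File;
import java.io.IOException;

// Hypothetical sketch of a user-initiated action: it runs only when the user clicks,
// on a file the user selected. No AI model is consulted at any point.
final class OpenDocumentAction {
    private final File userSelectedFile;

    OpenDocumentAction(File userSelectedFile) {
        this.userSelectedFile = userSelectedFile;
    }

    // Invoked from a button or menu handler. Returns true if the OS was asked to open the file.
    boolean run() {
        if (!userSelectedFile.isFile()) {
            return false; // missing file or a directory: nothing to open
        }
        if (!Desktop.isDesktopSupported()) {
            return false; // e.g., a headless environment with no desktop integration
        }
        Desktop desktop = Desktop.getDesktop();
        if (!desktop.isSupported(Desktop.Action.OPEN)) {
            return false;
        }
        try {
            // Hands the file to the operating system's default application
            // (for example, a PDF viewer or word processor).
            desktop.open(userSelectedFile);
            return true;
        } catch (IOException e) {
            return false; // no associated application, or it failed to launch
        }
    }

    public static void main(String[] args) {
        if (args.length == 0) {
            System.out.println("usage: java OpenDocumentAction.java <file>");
            return;
        }
        boolean opened = new OpenDocumentAction(new File(args[0])).run();
        System.out.println(opened ? "opened" : "not opened");
    }
}
```

In a Swing interface, the only trigger would be the click event itself, for example `openButton.addActionListener(e -> new OpenDocumentAction(selectedFile).run());`, where `openButton` and `selectedFile` are likewise hypothetical.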

This design ensures that humans remain fully in control of their desktop environment at all times. Every action is intentional, reflecting our belief that responsible AI should support human judgment, not replace it.

Data Security Matters to Us!

Why Data Security Matters So Much to Us

Data security is not a feature we added later; it is foundational to why Tag exists. Tag's two co-founders bring complementary backgrounds that place privacy, confidentiality, and security at the center of every architectural decision. One founder is a clinical psychologist, professionally trained in the ethical, legal, and practical requirements of protecting sensitive mental health information, where confidentiality is not optional and trust is paramount. The other founder is a senior technical architect who has designed secure government applications and conducted formal security reviews for enterprise clients. Together, these perspectives shape a platform built to safeguard information with the same seriousness expected in healthcare and government.

We are deeply aware of the growing concern around how data is collected, indexed, and reused, particularly in an AI landscape increasingly dominated by a small number of powerful vendors. Your information should not become fuel for marketing models, secondary monetization, or opaque future uses. Tag is deliberately designed so that your data remains yours. There is zero data retention on Tag servers; data storage is controlled by you. We do not train models on your prompts or documents. All data is encrypted, and the system is structured to minimize exposure at every stage of processing.

Just as importantly, Tag avoids vendor lock-in. You choose your underlying providers so that no single company controls your workflows, your data, or your future options. This approach reflects our belief that responsible AI should serve professionals and their clients, not concentrate power, extract value without consent, or erode trust. Our architecture is built in the best interests of our customers and their clients or patients, with security and privacy treated as ethical obligations, not trade-offs.

Common Security Questions

Is Tag an AI agent?

No. Tag is an AI-assisted tool where you maintain complete control. Unlike AI agents that can autonomously access files, execute commands, or make decisions, Tag's AI features only process data you explicitly provide and require your approval for all actions.

Can Tag's AI access files on my computer on its own?

No. All file access occurs through standard user-initiated operations. AI never independently browses, opens, or accesses files on your system.

How are AI-Chains different from AI agents?

AI-Chains are pre-configured combinations of context, prompts, and settings that process data you provide. They're deterministic workflows you control: the same configuration always runs the same steps. AI agents, by contrast, can independently decide what actions to take, what files to access, and what tools to use, which raises security and predictability concerns.
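As a rough illustration of that difference, here is a minimal Java sketch that models an AI-Chain as an immutable bundle of context, a prompt template, and settings. This is an assumption for illustration only: `AiChain`, `buildRequest`, and the `{input}` placeholder are hypothetical names, not Tag's actual format. Note what the class lacks: there is no list of tools, no file access, and no step where the model chooses the next action.

```java
import java.util.Collections;
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.Objects;

// Hypothetical sketch: an AI-Chain as a fixed bundle of context, prompt, and settings.
// It has no tool list and no file access; it only formats text the user supplies.
final class AiChain {
    private final String context;                 // standing instructions, e.g. document type
    private final String promptTemplate;          // uses a hypothetical {input} placeholder
    private final Map<String, Object> settings;   // e.g. model name, temperature

    AiChain(String context, String promptTemplate, Map<String, Object> settings) {
        this.context = Objects.requireNonNull(context);
        this.promptTemplate = Objects.requireNonNull(promptTemplate);
        // Defensive, read-only copy so the configuration cannot change after creation.
        this.settings = Collections.unmodifiableMap(new LinkedHashMap<>(settings));
    }

    // Builds the request from data the user explicitly provides. The same input always
    // yields the same request; only the model's reply may vary.
    String buildRequest(String userProvidedInput) {
        return context + "\n\n" + promptTemplate.replace("{input}", userProvidedInput);
    }

    Map<String, Object> settings() {
        return settings;
    }

    public static void main(String[] args) {
        Map<String, Object> settings = new LinkedHashMap<>();
        settings.put("temperature", 0.2);
        AiChain chain = new AiChain(
                "You are helping draft a clinical progress note.",
                "Summarize the following session notes:\n{input}",
                settings);
        System.out.println(chain.buildRequest("Client reported improved sleep."));
        System.out.println(chain.settings());
    }
}
```

In this sketch “deterministic” applies to the workflow: the same input always produces the same request, although the AI model's reply to that request can still vary.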

Why shouldn't I use a public AI chatbot for professional documentation?

Public AI chatbots are consumer tools designed for general use, and using them for professional documentation, especially in healthcare, education, or other professions with protected information, introduces serious data security and privacy risks.

When you type a prompt into a public chatbot, that content may be retained by the provider on their servers for extended periods. Many providers reserve the right to use your prompts and the responses generated to further train their AI models. Prompts and conversations may also be reviewed by human staff for quality and safety purposes.

There is typically no protection for personally identifiable information (PII) or protected health information (PHI) that you include in a public chatbot prompt. If you paste in a patient's name, a student's details, or confidential organizational data to get help drafting a document, that information may be stored, indexed, and associated with your query in ways entirely outside your control. Some providers have experienced data incidents in which chat histories were exposed to other users.

For professional documentation in regulated industries, this matters enormously. HIPAA, PIPEDA, and similar frameworks place strict obligations on how sensitive information is handled. A public chatbot offers no Business Associate Agreement (BAA), no audit trail, no access controls, and no guarantees about where your data goes after you hit send.

In contrast, Tag's infrastructure is purpose-built for professional documentation, including user access controls and a built-in audit trail, so you always know exactly where your data goes after you hit send (see the data flow diagrams above). Our Managed Plan also includes Business Associate Agreements (BAAs) with the AI vendors we use, which guarantee zero data retention and no training on your data.

Using the right tool from the start is the simplest compliance decision you can make.